19th April 2023

Fred Solomon, the founder of the Scratch Players World Amateur Ranking (SPWAR), has died just a few weeks short of his 70th birthday.

The SPWAR, a labour of love for Fred from January 2007 until his passing, was widely acknowledged as the most accurate world ranking of male amateur golfers – the “gold standard” as he liked to refer to it.

Fred Solomon (r) and myself at Los Angeles C.C. in 2017

Fred attended the University of California, Berkeley in 1974-76, obtaining a Bachelor of Science degree in Accounting and Business / Management. He played on the University’s PAC 8 golf team and remained a proud supporter of his alma mater throughout his life.

He subsequently obtained MBAs in Finance and Federal Taxation & Law from the University of Southern California (1978-9) and Golden Gate University (1977-83) respectively.

I believe he was brought up in Stockton in California and had a brother, Brian. He was clearly a very good golfer and I recall him telling me he was a seven-time club champion at his local Stockton G.&C.C.

Fred enjoyed a successful career in public accounting, stockbroking and ultimately pensions but his real passion was always golf. Settled in San Francisco, he became a member of The Olympic Club.

A debate in 1997 amongst golfing friends about which of two golf courses in San Francisco was the better sent Fred, who was now in his mid-40s, down a rabbit hole from which he was never to return. Most people would have tired of the discussion after a while, perhaps agreeing to disagree. However, it triggered in Fred the need to evaluate every course in Northern California and Northern Nevada and his detailed assessment led him to speak to club pros, tour pros and leading amateurs across the region.

During this period of research Fred realised that whilst numerous golf associations, bodies, federations and unions existed around the world they were all focussed on their own geographical area or player niche and that no one was providing a worldwide service targeted directly at scratch players (those with a handicap of 0.4 or lower). With the internet now becoming increasingly popular and future communication easier Fred saw an opportunity and the Scratch Players Group (SPG) was born.

The SPG was established by Fred and some of his friends as a non-profit organization on 17th February 1999. In addition to providing information to scratch golfers the group wanted to stage national level “players championships” in Northern California.

The inaugural Scratch Players Championship was held on 3-5 November 2000 at The Ridge G.C. in Auburn, California and this proved to be the most successful of a number of events they hosted. It was staged nine times up until 2010. From 2005 it was played in August immediately ahead of the U.S. Amateur Championship at a course nearby, becoming a top 25 worldwide event in its later years. The 2010 playing at Canterwood C.C. in Gig Harbor, Washington ended in controversy when it was subsequently established that the winner Woo-Hyun Kim from South Korea, who went on to also play in the U.S. Amateur at Chambers Bay, had actually turned professional earlier in the summer. The event was never staged again.

The hosting of the SPC, with the need to create exemption categories and assess entries, led Fred to start work on an amateur ranking. The project commenced in 2002 but it was in February 2004 that he decided to formally pursue it. He compiled and tested his list, seeking feedback from various parties, in 2005 and 2006 before launching the SPWAR on the internet on 13th January 2007. The R&A had started work on their World Amateur Golf Ranking (WAGR) in 2006 and when he got wind of its launch in mid-January 2007 he quickly published his list on his website so that he could claim to be the first.

And so began a David and Goliath story that would run for the next 15 years. Fred retired around this time and committed himself wholeheartedly to his ranking, determined to ensure it was the most accurate available to players, coaches and tournament organisers alike. Working in his basement office in San Francisco, he found the effort was great but his intelligence and obsessive personality helped him rise to the challenge. Fred was never able to properly promote or monetise the SPWAR. People simply found it by accident or word of mouth and he received no reward for his work.

Fred initially linked up with GolfWeek magazine, who had been running their own U.S. amateur ranking for some time, to publicise his work and then set about gaining the buy-in of the USGA. To his disappointment, but presumably not surprise, the USGA decided to endorse the WAGR at their annual meeting in February 2011. Their close relationship and The R&A’s decision to also produce a women’s ranking left them with little choice. For many this would have been an irrecoverable blow. Whilst the relationship with GolfWeek would fall away over time, the situation galvanised Fred, who started to work even harder on his men’s ranking, searching out more events and players of note.

Never one for tact and diplomacy, Fred would regularly highlight obvious inaccuracies with strongly worded emails to the executive of the USGA and R&A as well as their respective WAGR representatives. Over time enmity was born and in recent years Fred felt the USGA discreetly applied pressure to award bodies and tournament organisers in the USA to reduce their use of him and the SPWAR. The desire of golf organisations to control the narrative is obvious nowadays. This is rarely positive and I am sure that one of the reasons the SPWAR was so good was that it was independent, never influenced by broader agendas.

Similarly, correspondence with players and, more often than not, parents could be abrupt and direct too. Fred was not interested in long, drawn-out discussions that might distract him from the SPWAR’s critical path of promptly assessing events and ranking performances.

Over the last 10 years he fell into a routine of rising in the early hours and working through to late afternoon, when he would finish the day with a martini cocktail before dinner and an early night. When time allowed, primarily in the winter, he would go to the Olympic’s City gym or watch television; the Amazon Prime L.A. detective series ‘Bosch’ being his favourite. He was an accomplished skier and enjoyed a family trip to the slopes each year.

A Google search led me to his ranking in 2012 and as my own interest in amateur golf grew and I came to care about player and event rankings we became closer, corresponding frequently via email and in more recent years having a few Zoom video calls (the last one coming in January 2023). I never ceased to be amazed by his attention to detail and commitment to his work. “Everybody counts or nobody counts” is the motto Harry Bosch lives by in the programme and Fred certainly adopted this approach with the SPWAR. I often urged him to drop some of the lesser quality 36-hole events, events in the UK that I wasn’t even covering, to save him time but he wouldn’t have it and continued to send emails to event organisers all over the world in pursuit of results.

We agreed to meet up at the Walker Cup in September 2017, which was being staged at the Los Angeles C.C. Busy with the ranking, he drove the 380 miles down the coast on the Friday before returning home on the Saturday night. My wife and I met him for a meal at the Hillstone restaurant on Wilshire Boulevard in Santa Monica on the Friday night and we then spent Saturday watching the golf together. It was to my knowledge one of the few occasions he got out and watched the players he followed so closely; undertaking starter duties at the South Beach International Amateur in Miami Beach being another. He was a great character and enjoyable company. He was opinionated and as a Republican held strongly anti-woke views that differed from the majority of his fellow Californians.

Fred was diagnosed with cancer in the summer of 2021 and over the last 18 months endured radiation therapy, chemotherapy and a series of blood transfusions. Updating the ranking became harder for him but he battled on and only his in-the-know followers would have noticed any difference to what had come before. How he maintained the ranking throughout the summer of 2022 I shall never know.

In view of his age and deteriorating health Fred started to investigate a sale of the SPWAR in mid-2021. A few parties came forward and some visited with him but I assume they were all overwhelmed by the commitment it required to maintain. I assume the ranking will therefore not be continued and this valuable resource will be lost to the amateur game forever.

In the last email he sent me on 1st April he told me how he was planning to travel to Hawaii for a break on 19th April if his health allowed. He finished the email as he often did: “I’m getting the blinkies so it’s time to hit the rack for a re-charge. I’ll be back in the saddle in a couple of hours.” Unusually this time he went on “By the way, I’m lucky to have the wife that I do. I cannot imagine how I would get by without her help. I’d be dead.” I didn’t think too much of it at the time but hope he found the time to convey this message to Liz in his final weeks.

Fred last updated the SPWAR on 9th April, allocating some points to Sam Bennett following his impressive showing at The Masters. As the days ticked by and events were missed it became obvious that Fred was no longer working on the ranking.

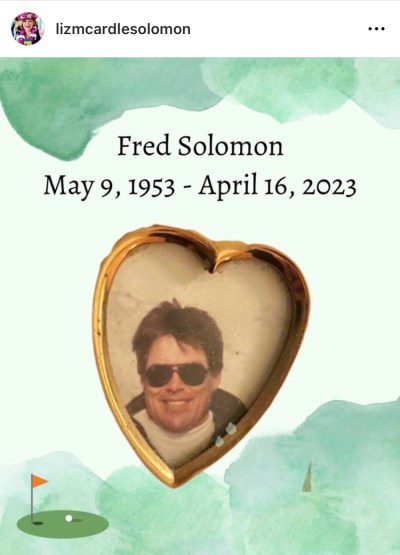

I feared the worst and eventually found the following Instagram post from his wife, Liz, who confirmed the news to family and friends on 17th April 2023.

Liz Solomon’s Instagram Account

Thanks Fred. The SPWAR was an astonishing piece of work that you and your family (long-suffering I’m sure) should be extremely proud of. No one was more knowledgeable about amateur golf and you will be greatly missed by all of those who came into contact with you over the years and valued your analysis.

I send my condolences to Liz and their two daughters, Audrey and Claire, of whom he always spoke with huge pride.

ME.

Copyright © 2014-2025, Mark Eley. All rights reserved.